RDU

Chip Technology

🔷 What is an RDU Chip?

RDU (Reconfigurable Dataflow Unit) chips are purpose-built processors designed specifically for AI inference.

Inference is not just about compute — it is about moving data efficiently, at speed, and at scale.

GPUs move data back and forth between memory and compute.

RDU systems allow data to flow continuously through the chip.

RDU (Reconfigurable Dataflow Unit) chips are purpose-built processors designed specifically for AI inference.

Traditional AI infrastructure has been built on GPU architectures, which are highly effective for training models. However, as AI moves into production, the challenge changes.

Inference is not simply a compute problem. It is a data movement problem.

This eliminates repeated memory transfers and enables:

lower latency

higher throughput

improved efficiency

What does this mean to me.

Less data movement. More performance. Lower energy.

Lower Energy means lower cost

16-chip RDU architecture per rack

~10 kW power footprint per rack

Deployable in standard data centre environments

No hyperscale cooling requirements

Optimised for continuous AI inference workloads

Deployable in Sovereign Environments

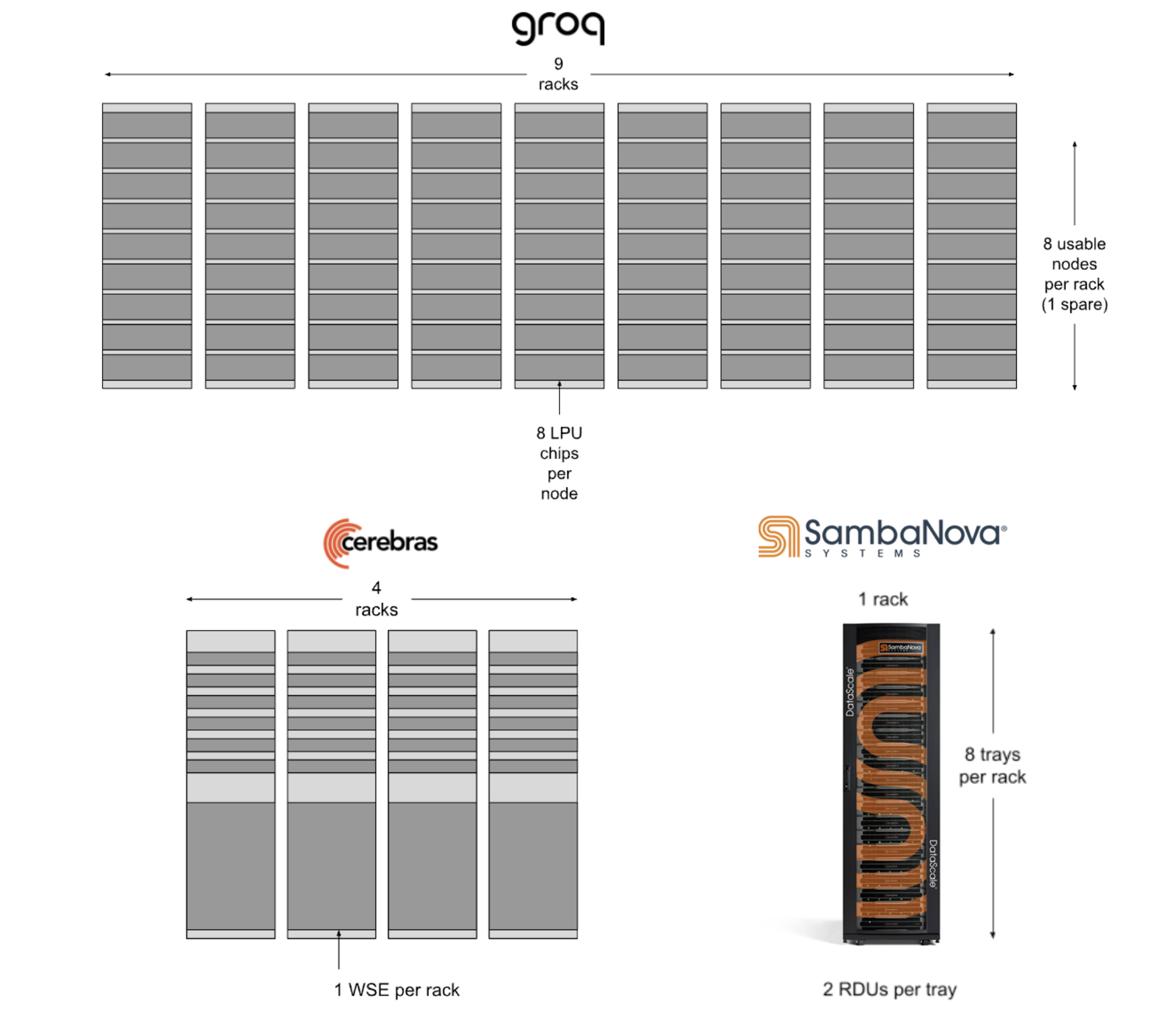

Datacenter footprint comparison for Llama 3.1 70B inference

Source Samabanova Blog click on image for more.

The Shift to Dataflow Architecture

In conventional GPU-based systems, data moves repeatedly between memory and compute. Each step in the process requires additional memory access, increasing both latency and energy consumption.

RDU architecture takes a fundamentally different approach.

Workloads are mapped as a continuous dataflow, allowing data to move through the system once, without repeated transfers back to memory. This eliminates unnecessary overhead and enables more efficient execution.

Speed Without Trade-Off

Traditional systems force a compromise between latency and throughput. Optimising for speed often reduces throughput. Optimising for throughput increases latency. With RDU architecture, this trade-off is removed.

The continuous dataflow model enables both:

fast response times (low latency)

high workload processing capacity (high throughput)

Performance remains consistent even under sustained load, making it well suited to real-time and agent-driven AI applications.

Efficiency at Scale

By reducing data movement, RDU systems deliver significantly improved efficiency.

less energy is required per operation

compute resources are more effectively utilised

performance scales predictably

This results in a lower cost of delivering AI workloads and enables economically viable deployment at scale.

Introducing SN40

The SN40 platform is a production implementation of RDU architecture, designed for real-world AI infrastructure.

Each rack is configured with:

16 RDU chips

approximately 10 kW average power consumption

full-stack software integration via SambaStack and SambaManaged.

What This Means in Practice

With SN40, organisations can:

deploy high-performance AI inference without hyperscale dependency

operate within existing energy and cooling constraints

run multiple models efficiently and continuously

scale workloads with predictable performance and cost

Designed for Real-World Deployment

SN40 is built to operate in standard data centre environments.

It does not require hyperscale infrastructure or liquid cooling, and can be deployed within existing facilities, including power-constrained environments.

This makes it suitable for:

enterprise deployments

regional infrastructure

sovereign AI environments.

ADD Deployment Model

ADD deploys SN40-based RDU infrastructure as part of a broader sovereign AI platform.

This enables:

infrastructure aligned to energy availability

integration with grid and renewable strategies

scalable deployment across distributed environments